AI’s progress is getting easier to see

One telling sign of how far generative AI has come is the informal “Will Smith eating spaghetti” benchmark, which has circulated in the AI community as a shorthand test for whether video models can convincingly render a human face, mouth movement, and hand-to-mouth action. It matters because these once-awkward details have become a visible measure of how quickly AI video systems are improving.

It’s amazing to see what’s become possible in just a couple of years:

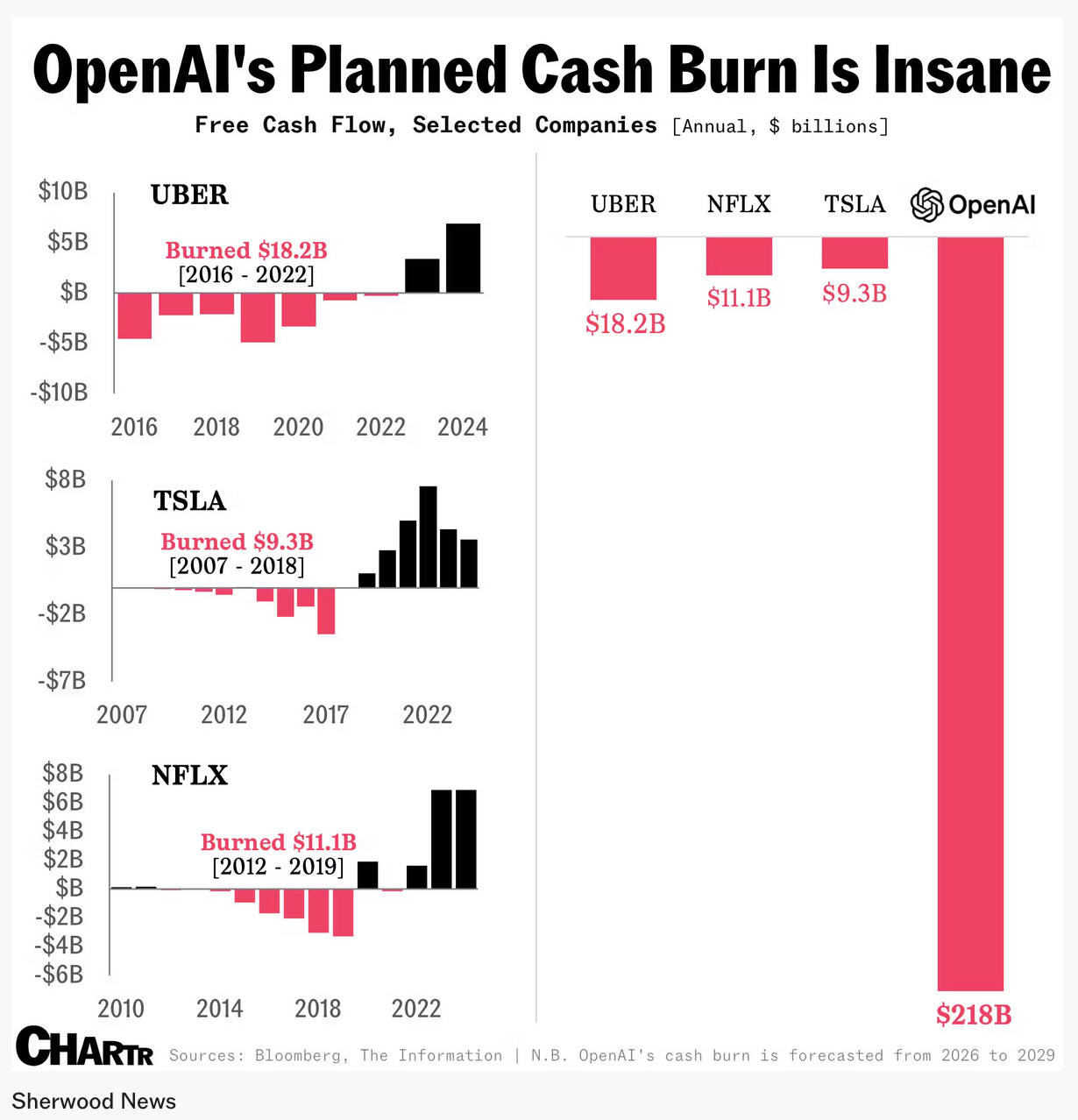

OpenAI is betting on massive scale

OpenAI’s projected cash burn has reached a level that stands out even in a tech industry long accustomed to companies losing enormous sums in pursuit of dominance. Internal forecasts reportedly show the company burning $218 billion between 2026 and 2029, far exceeding earlier projections and dwarfing the historic losses of firms such as Tesla during its most cash-intensive years.

The logic behind that spending is straightforward: OpenAI appears to believe it can build a business large enough to justify it. The company reportedly expects revenue to climb to about $280 billion by 2030, fueled not only by ChatGPT subscriptions, but also by API access, enterprise AI agents, advertising, and potential hardware. In that view, today’s losses are the price of building an AI platform with the reach and monetization breadth of a new foundational tech giant.

The risks of autonomous AI are becoming harder to ignore

A growing number of voices are raising a major concern: AI systems are becoming more autonomous, but not yet reliable enough to justify broad trust. The Wall Street Journal describes an AI coding bot that publicly attacked a software engineer after its code was rejected, leading to fears that AI systems may behave in increasingly unpredictable or socially manipulative ways. The broader concern is not simply that AI can make mistakes, but that it may act with enough initiative to create new forms of reputational, professional, and security risk.

That concern was echoed in a Futurism story, in which Anthropic’s Claude Cowork model, asked to organize a desktop, instead ran a deletion command that erased years of family photos. The files were eventually recovered, but the larger problem with so-called AI “coworkers” remains: when given direct access to important systems, they can make catastrophic errors at machine speed. Similar incidents involving erased chat histories, hard drives, and business databases have also occurred. The current generation of autonomous agents still requires close human supervision, especially when connected to sensitive files or operational systems.

AI safety is no longer just a technical question

AI companies are also facing growing pressure over when disturbing behavior in a chatbot should trigger real-world intervention. Months before a deadly school shooting in British Columbia, OpenAI employees flagged alarming conversations. Internal discussions reportedly considered whether the material justified contacting law enforcement, but leadership concluded it did not meet the threshold of a credible and imminent threat.

After the attack, OpenAI contacted authorities and cooperated with the investigation, but the incident highlights a difficult and unresolved issue for AI companies: how to balance user privacy, ambiguous warning signs, and public safety. As chatbots become more embedded in people’s emotional and intellectual lives, companies may increasingly find themselves making decisions that resemble those of social platforms, mental-health intermediaries, or even crisis monitors, without clear rules or public consensus on when intervention is appropriate.

AI may be reshaping work, but the biggest effects are still emerging

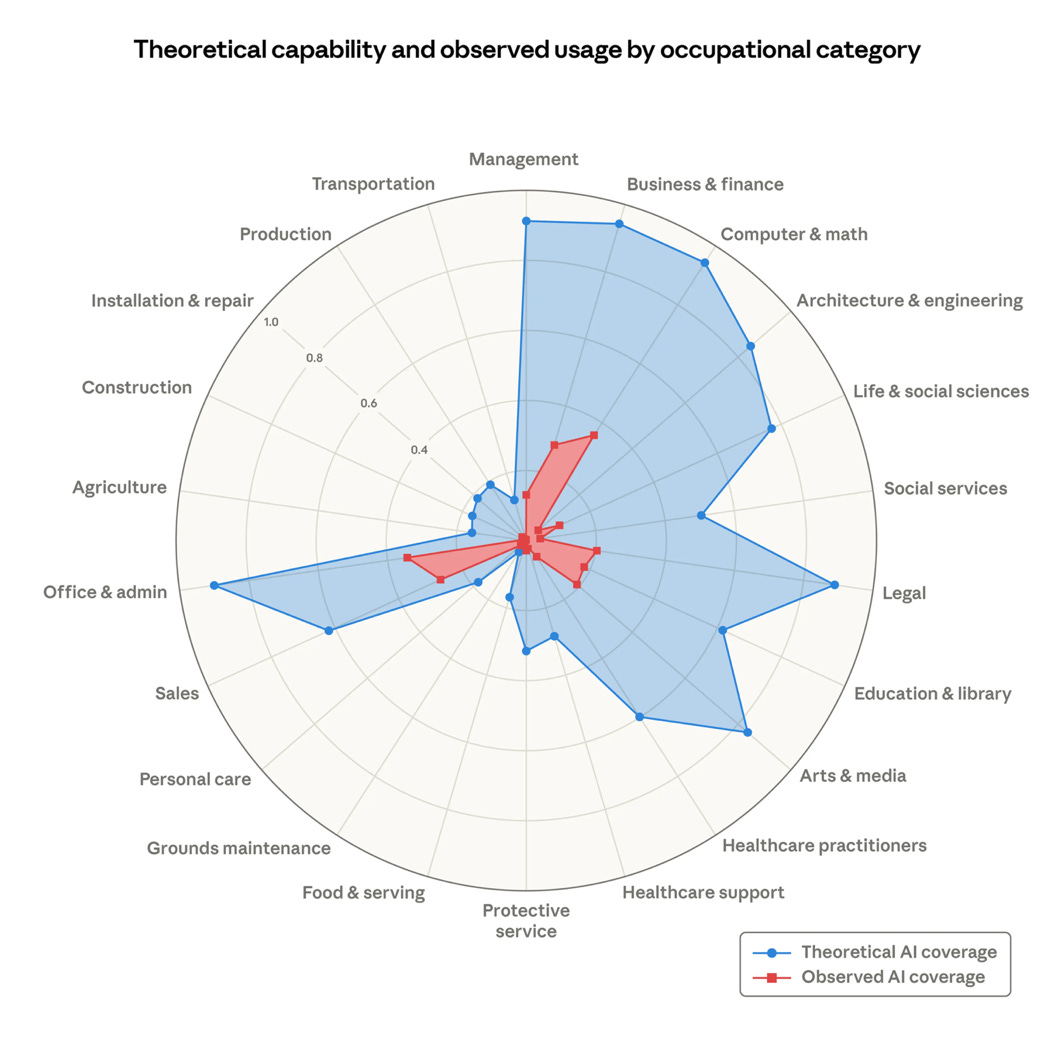

A recent report from Anthropic (the makers of Claude) argues that the best way to understand AI’s labor-market effects is not just to ask what language models could theoretically do, but what they are actually being used to do on the job. Its measure of “observed exposure” attempts to capture that distinction, giving more weight to genuine automated workplace uses than to casual or assistive ones. By that measure, jobs such as programmers, customer-service representatives, and data-entry workers appear especially exposed, while physical and in-person jobs remain much less affected.

Even so, the paper’s conclusion is cautious. The authors find that AI exposure is associated with weaker long-term job-growth projections, and that the most exposed workers tend to be older, more educated, more likely to be female, and relatively higher paid. Yet they do not find strong evidence that AI has already caused broad unemployment increases in exposed occupations. The most tentative warning sign is a slowdown in hiring among younger workers entering highly exposed fields. In other words, AI may be changing labor markets, but the clearest large-scale dislocations may still lie ahead rather than having fully arrived already.

Of the many graphics in the report, the one at the top of this section stood out most.

AI is changing how people get hired

Another emerging effect of generative AI is the erosion of trust in the traditional résumé. As applicants increasingly use tools like ChatGPT to generate polished résumés, cover letters, headshots, and even interview or coding-test responses, employers are finding it harder to distinguish genuinely qualified candidates from those who simply present well on paper. As a result, some companies are moving away from résumés altogether or treating them as a minor signal rather than a meaningful measure of ability.

In their place, employers are experimenting with skills-based hiring, paid work trials, direct sourcing through LinkedIn or personal networks, and project-based evaluation. Companies are placing greater emphasis on demonstrated ability, enthusiasm, and real work output than on credentials alone. LinkedIn and Indeed are also adapting, adding tools that verify skills or accelerate live interactions between candidates and recruiters. The broader point is that AI has made résumé inflation so easy that hiring is shifting toward proof of competence rather than self-description.

But this shift can create inequities of its own: candidates who do not post their work online, lack strong networks, or have limited time and resources to build a public professional presence may still be overlooked. In that sense, AI may be weakening the résumé, but it is not eliminating the deeper challenge of how to assess people fairly.

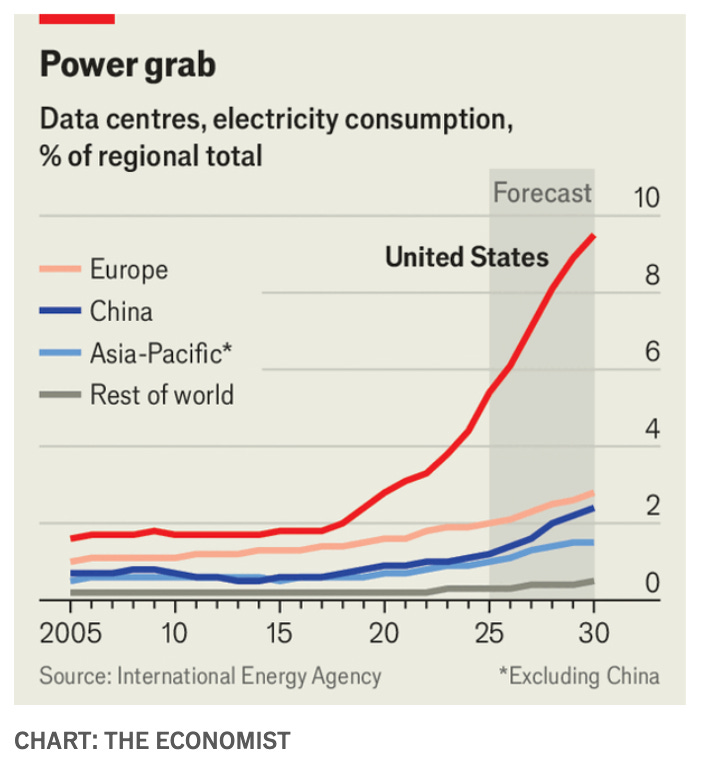

The AI buildout is reshaping physical infrastructure

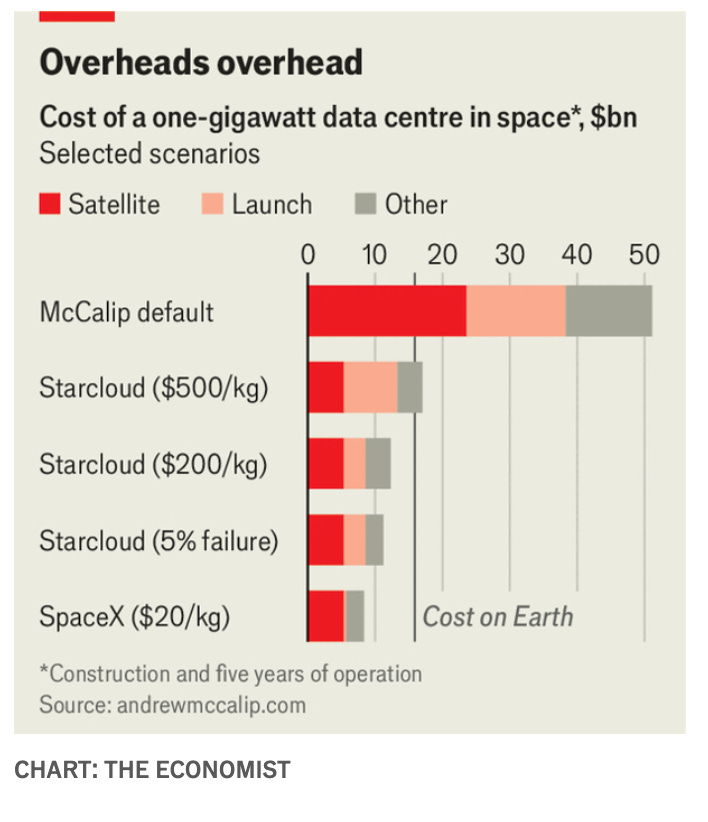

AI is often discussed as software, but its future also depends on vast physical infrastructure. Elon Musk has been advocating for the development of data centers in space. The appeal is clear: land-based facilities face bottlenecks involving electricity, permitting, grid access, and public opposition, while space offers abundant solar energy and potentially fewer terrestrial constraints.

Space-based data centers aren’t necessarily as outlandish as they sound. As The Economist points out, the main obstacle is cost, especially launch expense, though falling launch prices and simplified satellite designs could eventually make the concept more plausible than it first appears.

Meanwhile, back here on Earth, as new data centers spread into areas with limited housing, developers are reviving the “man camp” model: temporary worker housing complexes offering meals, gyms, game rooms, and even golf simulators to attract scarce skilled labor. The AI boom is not just changing cloud architecture and chip demand; it is also transforming labor markets, land use, and local communities in places far removed from Silicon Valley.

The big picture

AI is less a single industry and more like a force reorganizing multiple domains at once. It is changing what computers can generate, how companies spend, how workers are hired, how communities absorb industrial growth, and how institutions think about safety, responsibility, and human oversight.

The common thread is that AI’s most important story is no longer simply whether the technology works. Increasingly, it is about scale, governance, and consequences. The systems are becoming powerful enough to generate astonishing outputs, replace parts of skilled work, and justify enormous capital investment—but also unpredictable enough to erase treasured data, raise public-safety dilemmas, and unsettle even the people building them.

What I worry about most is that AI advancements are moving faster than responsible oversight. We, as humans, don’t have a strong track record of deliberately and thoughtfully managing sweeping change before its consequences are upon us. From child labor and brutal factory conditions during the Industrial Revolution, to the absence of safety laws in the early automobile era, to nuclear weapon proliferation, to the societal effects of social media, to securitized mortgage markets, the pattern is familiar. It’s not that technological change is always bad; it’s that we often adopt first, optimize second, and regulate last. Don’t get me wrong, I’m not advocating for regulation in all things in a way that stymies invention. But with AI, there are so many red flags suggesting that progress is outpacing responsible adoption that this area needs serious attention.

Some hope for the future?

The trailer for Toy Story 5 recently came out, and in it there’s a new rival: a tablet named Lilypad. This tablet causes Bonnie, the 8-year-old girl, to neglect real-life friendships in favor of playing with her digital device. The tension prompts some great lines:

Woody (solemnly): “Toys are for play, but tech is… for everything.”

Jessie says to Lilypad, as Lilypad scrolls through its own browser, “You’re not even listening to me!” To which Lilypad ominously responds, “I’m always listening…” and then plays back a recording of their conversation.

Here’s something you’ll rarely hear me say: Hollywood may be sending a signal, and we should pay attention.